

Ransomware Protection

Kubernetes-Native Ransomware Protection Solution

- Ensure data protection

- Provide isolation from ransomware attacks

- Deliver point-in-time recovery

Complete Application and Data Protection Against Cyberattacks

Early Threat Detection

Raise an early flag on potentially malicious activity or imminent attacks.

Encrypted and Immutable Backups

Always have a safe and consistent copy of your applications and data.

Accelerated Recovery

Minimize business downtime due to attacks with quick and secure restores.

The Reality of Ransomware Attacks on Organizations

Harness the Power of Kasten K10 to Safeguard Data

Data Protection

Let users easily create and schedule routine backups of applications and their associated persistent data volumes. Backups are isolated from primary storage and can be used to restore operations quickly.

Point-in-Time Recovery

Recover your data from a specific point in time, for example when you need to roll back to a known-good state of operations. In a ransomware disaster scenario, point-in-time recovery is essential.

Isolation From Ransomware

Isolate your production and backup environments so that operations can be restored from a clean, reliable backup in the event of a security incident. Key information can also be secured for specific restore scenarios.

Application Mobility

Enable graceful migration of an application and its data across Kubernetes clusters, including to separate production resources if the previously live platform is compromised.


FAQs

How does Kasten K10 mitigate ransomware risk in Kubernetes?

What makes Kubernetes environments susceptible to attacks?

How does Kasten K10 protect Kubernetes data?

Can Kasten K10 detect ransomware in Kubernetes backups?

Radical Resilience Starts Here

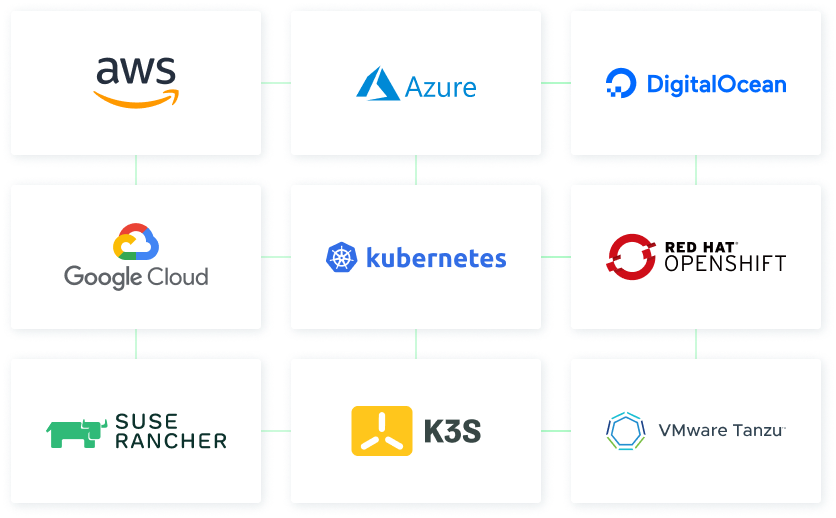

hybrid cloud and the confidence you need for long-term success.